When the digital humanities specialist position was first designed, it was meant to facilitate individual research agendas using off-the-shelf and custom-built digital tools and objects. As time went by, it also began to include the assessment and development of centralized resources that could be used in a variety of digital humanities projects. Listed below are a few of the tools and objects that have been used or are currently in use for supported projects here at Stanford.


MySQL

Traditional relational databases are still the basis for much data analysis and web content, and in my experience the database most commonly used for digital humanities work is MySQL. Changes are afoot, though: document datastores and other NoSQL solutions are growing in popularity, and spatially enabled databases like PostGIS (which runs on Postgres) are increasingly prominent. All of these, however, still rely on fundamental concepts of query languages and data collation that can be acquired through familiarity with MySQL. I still do much of my data analysis with SQL and scripted batch queries. It doesn’t make for attractive screenshots, but scholars often need traditional statistical analysis of their datasets and models.
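As an illustration of what "scripted batch queries" can look like, here is a minimal sketch that runs a named batch of aggregate SQL queries in sequence. It uses Python's built-in `sqlite3` module in place of MySQL so that it runs without a server; the `letters` table, its columns and the sample rows are hypothetical placeholders. Against MySQL the same SQL would go through a driver such as mysql-connector-python, which uses `%s` rather than `?` as its parameter placeholder.

```python
import sqlite3

# sqlite3 stands in for MySQL here so the sketch runs without a server.
# The table, columns and rows below are hypothetical placeholders.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE letters (author TEXT, year INTEGER, recipient TEXT)")
conn.executemany(
    "INSERT INTO letters VALUES (?, ?, ?)",
    [
        ("Author A", 1750, "Recipient X"),
        ("Author A", 1751, "Recipient Y"),
        ("Author B", 1751, "Author A"),
        ("Author A", 1752, "Recipient X"),
    ],
)

# A named batch of aggregate queries, run in sequence by the script
# instead of being typed one at a time into a client.
BATCH = {
    "letters_per_author": (
        "SELECT author, COUNT(*) FROM letters GROUP BY author ORDER BY author"
    ),
    "letters_per_year": (
        "SELECT year, COUNT(*) FROM letters GROUP BY year ORDER BY year"
    ),
    "top_recipients": (
        "SELECT recipient, COUNT(*) AS n FROM letters "
        "GROUP BY recipient ORDER BY n DESC, recipient LIMIT 2"
    ),
}

# fetchall() materializes each result as a list of tuples.
results = {name: conn.execute(sql).fetchall() for name, sql in BATCH.items()}
for name, rows in results.items():
    print(name, rows)
conn.close()
```

Keeping the queries in a dictionary means a new analysis is one more entry in the batch, and the whole set can be rerun whenever the underlying data changes.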

PHP

I had no choice with PHP: it came as part of the WAMP stack, and a very good friend of mine knew how to use it. I suspect this is how people end up with most scripting languages. I used PHP extensively to build data services for my Flex apps before retiring from the AS3 trade, and I still use it for any scraping or scripting task that deals with traditional MySQL-style data, as well as for bulk data processing and building custom endpoints. It’s boring, and it’s necessary.

Spatial Analysis

As I’ve repeated to everyone who will listen, three types of analysis tend to be brought to bear on digital humanities projects: Spatial Analysis, Text Analysis and Network Analysis. For the first, I still lean on ArcGIS for most of my geoprocessing and map-making, though I’ve started to use QGIS more lately, especially since it’s so well suited to producing data that can be fed into Drupal. ArcPy is too seductively easy for me, though the smart people I know who do this work more skillfully and more often than I do are much more invested in other Python geoprocessing packages.
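One common interchange format for moving point data from a desktop GIS into a web platform is GeoJSON. The sketch below, which assumes the target site can import GeoJSON (whether a given Drupal install can depends on the modules it runs), converts hypothetical `(name, longitude, latitude)` records into a GeoJSON FeatureCollection using only the standard library.

```python
import json


def to_geojson(records):
    """Convert (name, longitude, latitude) tuples into a GeoJSON FeatureCollection.

    GeoJSON (RFC 7946) orders point coordinates as [longitude, latitude].
    """
    features = []
    for name, lon, lat in records:
        features.append(
            {
                "type": "Feature",
                "geometry": {"type": "Point", "coordinates": [lon, lat]},
                "properties": {"name": name},
            }
        )
    return {"type": "FeatureCollection", "features": features}


# Hypothetical sample sites; replace with records exported from ArcGIS or QGIS.
sites = [("Site 1", -122.17, 37.43), ("Site 2", 12.50, 41.90)]
print(json.dumps(to_geojson(sites), indent=2))
```

The longitude-first ordering is the most common source of maps that come out mirrored or in the ocean, so it is worth checking before any import.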

JavaScript Visualization

The future is finally here. I was one of the loudest ActionScript 3 supporters, but even I have to admit that libraries like paper.js and D3 are now so feature-rich that there’s no reason to create a rich Internet application that can’t be properly displayed on an iPad. Tools like the gexfjs viewer let me embed an interactive network visualization in a page in five minutes.

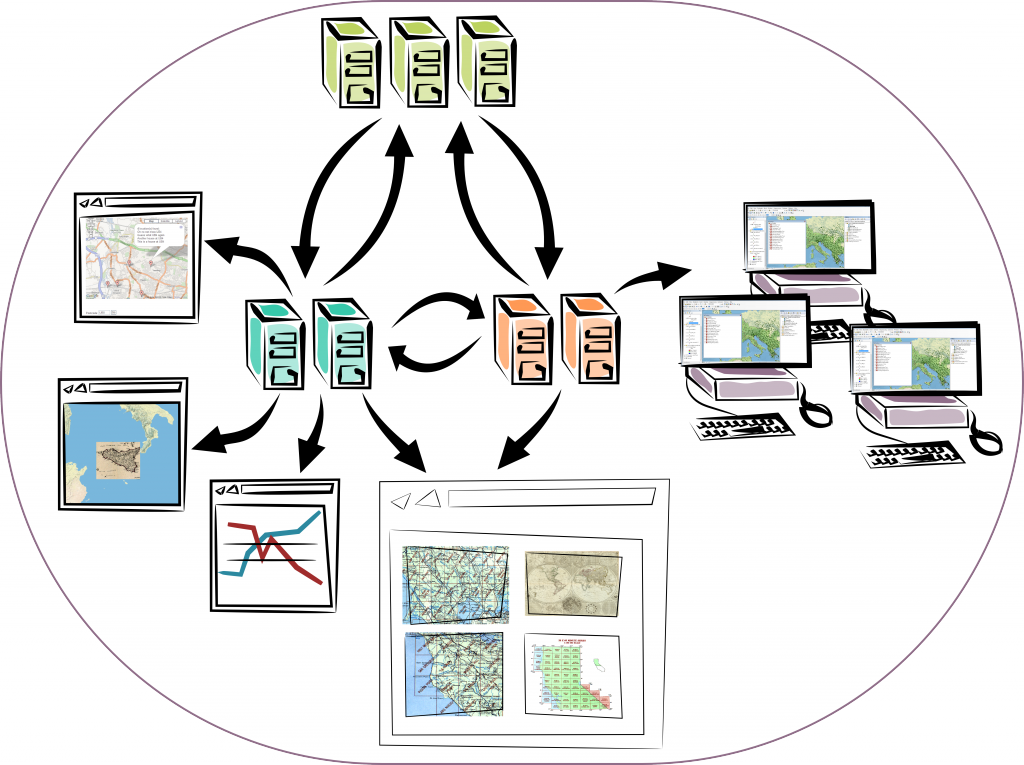
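Much of the work in a browser-based network visualization is preparing the data. D3's force-directed layout examples commonly consume a JSON object with `nodes` and `links` arrays; the sketch below builds that shape from a hypothetical edge list. It assumes node ids are strings, so on the D3 side the link force would need an id accessor (for example `d3.forceLink(links).id(d => d.id)` in D3 v4 and later) rather than the default index-based matching.

```python
import json


def edges_to_d3(edges):
    """Turn (source, target) pairs into the nodes/links JSON shape
    commonly used by D3 force-directed layout examples."""
    # Collect each distinct node once, sorted for a stable order.
    names = sorted({name for edge in edges for name in edge})
    nodes = [{"id": name} for name in names]
    links = [{"source": s, "target": t} for s, t in edges]
    return {"nodes": nodes, "links": links}


# Hypothetical edge list.
edges = [("A", "B"), ("B", "C"), ("A", "C")]
print(json.dumps(edges_to_d3(edges)))
```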

PostGIS/GeoServer

I tested GeoServer and PostGIS for hosting and processing spatial data in the summer of 2011, and they have worked out remarkably well. PostGIS 2 seems to make a good case for unifying network and spatial analysis, as well as offering a possible way to store 3D objects. As anyone can tell you, playing with new technologies, and even integrating them into small-scale projects, does not require the kind of skill and investment that goes into making them available in a production environment for a large audience; doing that is one of my goals for 2012.
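Once a PostGIS table is published as a GeoServer layer, clients typically request rendered maps through the OGC Web Map Service (WMS) protocol. This sketch builds a WMS 1.1.1 `GetMap` request URL with the standard library; the server address and the `workspace:roads` layer name are hypothetical placeholders, and the bounding box is assumed to be in the units of the requested `srs`.

```python
from urllib.parse import urlencode


def wms_getmap_url(base_url, layer, bbox, width=512, height=512,
                   srs="EPSG:4326", fmt="image/png"):
    """Build an OGC WMS 1.1.1 GetMap request URL.

    bbox is (minx, miny, maxx, maxy) in the units of srs.
    """
    params = {
        "service": "WMS",
        "version": "1.1.1",
        "request": "GetMap",
        "layers": layer,
        "styles": "",  # empty means the layer's default style
        "bbox": ",".join(str(v) for v in bbox),
        "width": width,
        "height": height,
        "srs": srs,
        "format": fmt,
    }
    # urlencode percent-escapes characters such as ':' and ','.
    return base_url + "?" + urlencode(params)


# Hypothetical server address and layer name.
url = wms_getmap_url("http://localhost:8080/geoserver/wms", "workspace:roads",
                     (-10.0, 35.0, 30.0, 60.0))
print(url)
```

Pasting the printed URL into a browser is a quick way to confirm that a layer is published and styled before wiring it into a web map.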

Social Media

Besides using Google+, I’ve spent some time studying social media sites such as Twitter, Wikipedia and TV Tropes. Beyond understanding how these projects are structured, I’m interested in integrating what I learn about social media into digital humanities projects.

Gephi

The main reason I updated this page is that it made no mention of the tool that has come to define my public presence in digital humanities scholarship. While I don’t actually do everything in Gephi, I’ve found it to be one of the most useful analysis packages available. I’ve started coding in Java with the Gephi Toolkit, both to create network animations and to build new analytical tools.
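Getting data into Gephi often starts with writing a GEXF file, the XML graph format Gephi reads natively. The sketch below is in Python rather than the Java used with the Gephi Toolkit, and it is not the author's code: it serializes a hypothetical undirected edge list into minimal GEXF 1.2 markup with nodes, labels and edges, and no attributes or layout positions.

```python
import xml.etree.ElementTree as ET

# GEXF 1.2 namespace.
GEXF_NS = "http://www.gexf.net/1.2draft"


def q(tag):
    """Qualify a tag name with the GEXF namespace."""
    return f"{{{GEXF_NS}}}{tag}"


def to_gexf(edges):
    """Serialize an undirected edge list of (source, target) labels as GEXF XML."""
    ET.register_namespace("", GEXF_NS)  # emit GEXF as the default namespace
    root = ET.Element(q("gexf"), version="1.2")
    graph = ET.SubElement(root, q("graph"), mode="static", defaultedgetype="undirected")
    nodes_el = ET.SubElement(graph, q("nodes"))
    edges_el = ET.SubElement(graph, q("edges"))

    # Assign a stable numeric id to each distinct label.
    labels = sorted({label for edge in edges for label in edge})
    ids = {label: str(i) for i, label in enumerate(labels)}
    for label in labels:
        ET.SubElement(nodes_el, q("node"), id=ids[label], label=label)
    for i, (source, target) in enumerate(edges):
        ET.SubElement(edges_el, q("edge"), id=str(i),
                      source=ids[source], target=ids[target])
    return ET.tostring(root, encoding="unicode")


# Hypothetical edge list.
edges = [("Author A", "Author B"), ("Author B", "Author C"), ("Author A", "Author C")]
print(to_gexf(edges))
```

Saving the output with a `.gexf` extension should be enough to open it in Gephi, where layout, filtering and metrics can take over.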